The illusion of precision in analytics dashboards

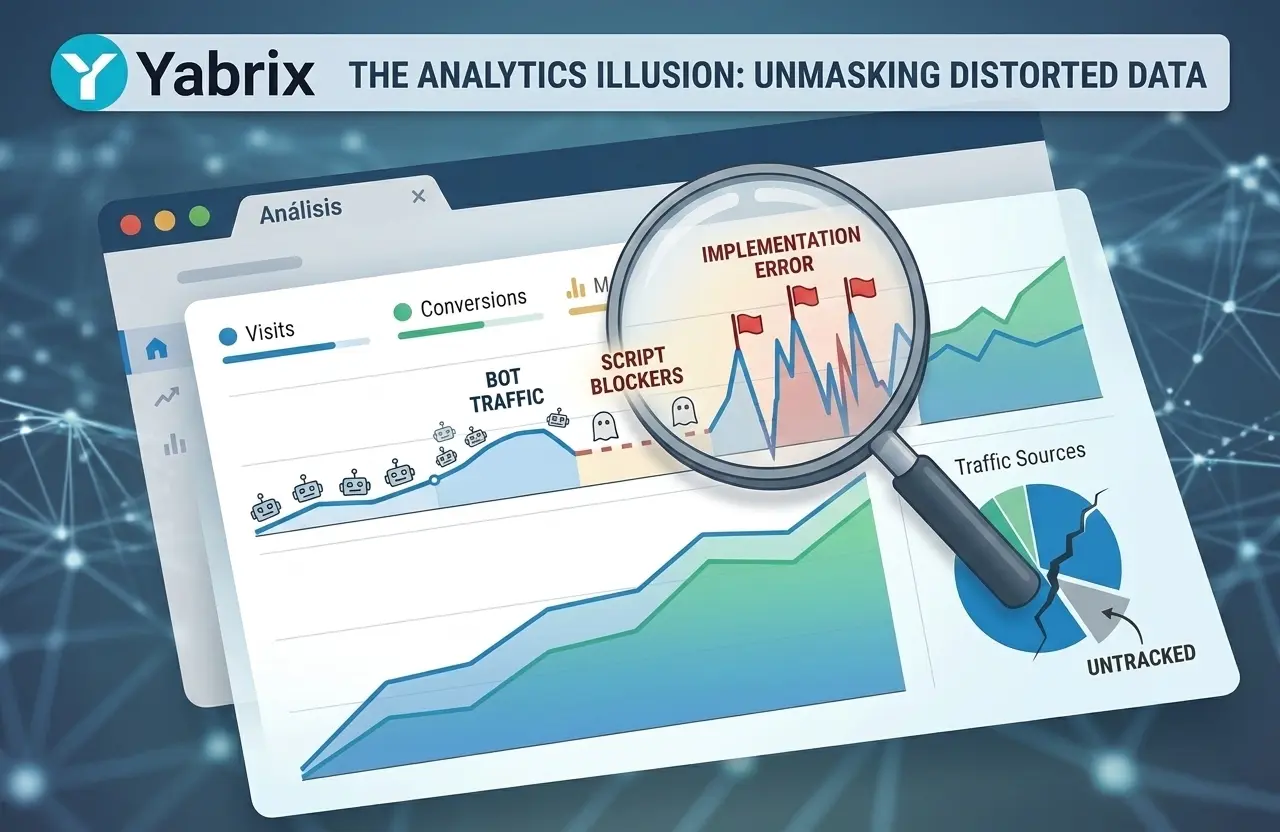

Analytics platforms present data with an appearance of mathematical precision. Dashboards display detailed figures about sessions, user interactions and conversions.

But this precision can be misleading.

Website measurement operates within complex environments influenced by multiple external factors. When these factors are not properly controlled, the data begins to accumulate noise and the metrics gradually diverge from actual user behavior.

Over time, these distortions can significantly affect how performance is interpreted.

Bots and automated traffic

One of the most significant sources of distortion in web analytics is automated traffic.

Years ago, bots were relatively easy to identify. Many used recognizable user agents or performed simple requests that could be filtered without much difficulty.

Today the situation is very different.

Modern automated systems can imitate human behavior with increasing accuracy. They can:

- execute JavaScript

- load complete web pages

- simulate scrolling

- trigger interaction events

- navigate across multiple pages

From the perspective of many analytics tools, this traffic appears legitimate.

Reports from cybersecurity companies, such as Imperva's annual Bad Bot Report, have repeatedly attributed a large share of global internet traffic to bots.

When this traffic is not properly filtered, key metrics such as sessions, engagement or time on page can become inflated.
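
As a rough illustration of basic filtering, the sketch below flags sessions with two simple heuristics: a user-agent keyword match and an implausibly regular gap between page views. The `Session` fields, the keyword list and the threshold are assumptions made for this example, not the behavior of any particular analytics product, and the sophisticated bots described above would likely evade both checks.

```python
from dataclasses import dataclass
from statistics import pstdev

# Hypothetical keyword list; real deployments rely on maintained bot databases.
BOT_UA_KEYWORDS = ("bot", "crawler", "spider", "headless")


@dataclass
class Session:
    user_agent: str
    page_view_times: list[float]  # seconds since the session started


def looks_automated(session: Session, min_gap_stdev: float = 0.05) -> bool:
    """Return True when a session matches either simple bot heuristic."""
    ua = session.user_agent.lower()
    if any(keyword in ua for keyword in BOT_UA_KEYWORDS):
        return True

    # People rarely request pages at perfectly even intervals.
    times = session.page_view_times
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return len(gaps) >= 3 and pstdev(gaps) < min_gap_stdev


sessions = [
    Session("Mozilla/5.0 (compatible; ExampleBot/1.0)", [0.0, 4.2]),
    Session("Mozilla/5.0 (Windows NT 10.0) Chrome/120", [0.0, 2.0, 4.0, 6.0, 8.0]),
    Session("Mozilla/5.0 (Macintosh) Safari/605", [0.0, 12.4, 31.0, 95.7]),
]
human_sessions = [s for s in sessions if not looks_automated(s)]
print(len(sessions), "recorded,", len(human_sessions), "kept")
```

Here the first session is dropped for its user agent and the second for its perfectly even two-second cadence, so only the third is kept.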

Scripts blocked by browsers and extensions

Another important factor affecting analytics accuracy is the growing use of tracking protection mechanisms.

Modern browsers increasingly limit the ability of third-party scripts to track users.

For example:

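- Safari uses Intelligent Tracking Prevention (ITP), which restricts cross-site tracking and caps the lifetime of cookies set by scripts

- Firefox implements Enhanced Tracking Protection, which blocks many known trackers by default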

- browser extensions such as uBlock Origin block many analytics scripts entirely

When analytics scripts are prevented from loading, visits simply go unrecorded.

As a result, script-based analytics platforms tend to undercount real traffic, and the size of the gap varies with the browsers and extensions the audience uses.
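
One way to gauge how much traffic goes unrecorded is to compare page requests in the server logs with page views reported by the client-side script for the same period. The sketch below assumes both counts cover the same pages and that the server-side count has already been cleaned of bot traffic. The function name and the numbers are illustrative only.

```python
def unrecorded_share(server_page_requests: int, analytics_page_views: int) -> float:
    """Fraction of server-side page requests with no matching analytics hit."""
    if server_page_requests <= 0:
        raise ValueError("server_page_requests must be positive")
    missing = max(server_page_requests - analytics_page_views, 0)
    return missing / server_page_requests


# Illustrative numbers, not measurements.
share = unrecorded_share(server_page_requests=10_000, analytics_page_views=8_200)
print(f"{share:.0%} of page requests were not recorded")
```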

Measurement limitations in analytics platforms

Even widely used platforms such as Google Analytics can show discrepancies between reported metrics and actual website activity.

These differences can appear for several reasons:

- script blocking

- differences in how sessions are calculated

- sampling or processing delays

- limitations in event tracking implementation

In large or complex websites, these differences can become significant.
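
To make the point about session calculation concrete, the sketch below counts sessions for a single visitor using an inactivity timeout, which is a common way to delimit sessions. The same hits produce different session counts depending on the timeout chosen. The function name and the hit times are assumptions for illustration.

```python
def count_sessions(hit_minutes: list[float], timeout_minutes: float) -> int:
    """Count one visitor's sessions, splitting on gaps longer than the timeout."""
    if not hit_minutes:
        return 0
    ordered = sorted(hit_minutes)
    sessions = 1
    for previous, current in zip(ordered, ordered[1:]):
        if current - previous > timeout_minutes:
            sessions += 1
    return sessions


# Hit times in minutes; the gaps between hits are 5, 20, 25 and 70 minutes.
hits = [0, 5, 25, 50, 120]
print(count_sessions(hits, timeout_minutes=30))  # 2
print(count_sessions(hits, timeout_minutes=15))  # 4
```

Two tools that receive identical hits can therefore report different session totals without either being wrong.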

Misconfigured tracking events

Tracking events are another frequent source of inaccurate data.

As websites evolve, new tracking events are often added to measure additional interactions such as clicks, scroll depth, video plays or conversions.

Without careful auditing, this process can lead to issues like:

- duplicated events

- triggers firing without real user interaction

- inconsistent implementation across pages

- differences between testing and production environments

When this happens, analytics dashboards still display metrics, but the numbers no longer represent actual user behavior.
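
An audit for duplicated events can start with something as simple as the sketch below, which flags events that repeat the same session, name and target within a short window. The event fields (`session_id`, `name`, `target`, `ts_ms`) and the 500 ms window are hypothetical choices for this example. A real audit would adapt them to the tracking schema in use.

```python
def find_duplicate_events(events: list[dict], window_ms: int = 500) -> list[dict]:
    """Return events that repeat the same (session, name, target) within window_ms."""
    last_seen: dict[tuple, int] = {}
    duplicates = []
    for event in sorted(events, key=lambda e: e["ts_ms"]):
        key = (event["session_id"], event["name"], event["target"])
        previous_ts = last_seen.get(key)
        if previous_ts is not None and event["ts_ms"] - previous_ts <= window_ms:
            duplicates.append(event)
        last_seen[key] = event["ts_ms"]
    return duplicates


events = [
    {"session_id": "s1", "name": "click", "target": "#buy", "ts_ms": 1000},
    {"session_id": "s1", "name": "click", "target": "#buy", "ts_ms": 1010},  # double fire
    {"session_id": "s2", "name": "click", "target": "#buy", "ts_ms": 1005},
    {"session_id": "s1", "name": "click", "target": "#buy", "ts_ms": 5000},
]
for duplicate in find_duplicate_events(events):
    print("possible duplicate:", duplicate)
```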

The signal-to-noise problem

A key concept in analytics is the relationship between signal and noise.

The signal represents genuine user behavior. Noise includes all interactions that do not correspond to real human activity or are incorrectly recorded.

Noise can originate from many sources:

- bots and scrapers

- automated monitoring systems

- artificial traffic

- analytics implementation errors

When noise levels increase, extracting meaningful insights from analytics data becomes more difficult.
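
A small worked example shows how noise distorts a ratio metric. It assumes, for simplicity, that noise sessions never convert. All figures are invented for illustration.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversions divided by sessions."""
    return conversions / sessions


# Invented figures for illustration.
recorded_sessions = 10_000
noise_sessions = 2_500  # bots, monitoring checks, misfired tracking
conversions = 150       # assumed to come only from real users

noise_share = noise_sessions / recorded_sessions
reported = conversion_rate(conversions, recorded_sessions)
adjusted = conversion_rate(conversions, recorded_sessions - noise_sessions)

print(f"noise share: {noise_share:.0%}")
print(f"reported conversion rate: {reported:.2%}")
print(f"rate among real sessions: {adjusted:.2%}")
```

Under these assumptions, a quarter of the recorded sessions being noise pulls the reported conversion rate down from 2.00% to 1.50%, even though real user behavior has not changed.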

Cookie-less analytics and privacy-first measurement

Another major shift affecting analytics is the transition toward privacy-focused measurement models.

Traditional analytics tools often rely on cookies to identify users and track sessions across visits. However, increasing privacy regulation and browser restrictions have significantly limited the effectiveness of cookie-based tracking.

Regulations such as the GDPR in Europe have reinforced the importance of minimizing personal data collection.

As a result, many modern analytics platforms are moving toward cookie-less architectures that measure traffic without relying on persistent identifiers.

Cookie-less analytics can simplify compliance while reducing dependence on tracking technologies that browsers increasingly restrict.
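
One possible way to count visitors without a persistent identifier is to derive a short-lived key from request attributes and a salt that is rotated daily. The sketch below illustrates that idea only. The function names, the choice of inputs and the rotation schedule are assumptions, and it is not a description of how any specific platform works.

```python
import hashlib
import secrets


def new_daily_salt() -> bytes:
    """Generate a random salt; rotate it daily and discard the old one."""
    return secrets.token_bytes(16)


def visitor_key(daily_salt: bytes, site: str, ip: str, user_agent: str) -> str:
    """Derive a short-lived visitor key without a cookie or a stored raw IP."""
    material = "|".join((site, ip, user_agent)).encode("utf-8")
    return hashlib.sha256(daily_salt + material).hexdigest()


salt_today = new_daily_salt()
salt_tomorrow = new_daily_salt()

a = visitor_key(salt_today, "example.com", "203.0.113.7", "Mozilla/5.0")
b = visitor_key(salt_today, "example.com", "203.0.113.7", "Mozilla/5.0")
c = visitor_key(salt_tomorrow, "example.com", "203.0.113.7", "Mozilla/5.0")

print(a == b)  # same salt: hits from the same visitor share a key
print(a == c)  # new salt: the key changes, so days cannot be linked
```

The trade-off in this design is that visits on different days cannot be tied to the same person, so long-term returning-visitor metrics are given up in exchange for not keeping an identifier.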

Toward signal-focused analytics

As digital environments grow more complex, the goal of analytics should not simply be to collect more data, but to improve the quality of the signals available.

Achieving this requires attention to several factors:

- advanced filtering of automated traffic

- well-designed tracking architecture

- consistent event implementation

- continuous validation of collected data

Reducing noise leads to a clearer understanding of how users actually interact with a website.
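
Continuous validation can be partly automated. As a minimal sketch, the check below flags days whose event count deviates sharply from the median of the preceding days, which may indicate a bot spike or a broken tag. The window, the tolerance and the sample counts are arbitrary values chosen for illustration.

```python
from statistics import median


def flag_anomalies(daily_counts: list[int], window: int = 7,
                   tolerance: float = 0.5) -> list[int]:
    """Return indexes of days that deviate from the trailing median by more than tolerance."""
    flagged = []
    for i in range(window, len(daily_counts)):
        baseline = median(daily_counts[i - window:i])
        if baseline == 0:
            continue  # no meaningful baseline to compare against
        deviation = abs(daily_counts[i] - baseline) / baseline
        if deviation > tolerance:
            flagged.append(i)
    return flagged


# Invented daily event counts; index 8 contains a sudden spike.
counts = [100, 104, 98, 101, 97, 103, 99, 102, 210, 100]
print(flag_anomalies(counts))  # [8]
```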

Analytics designed to reduce noise

In recent years, a new generation of analytics platforms has emerged with the goal of improving data reliability.

These tools prioritize:

- filtering automated traffic

- privacy-first architecture

- consistent measurement models

- real-time insights

Yabrix was designed around this philosophy: it focuses on signal rather than noise and provides real-time analytics on a privacy-first architecture.

The objective is simple: deliver data that more closely reflects what is actually happening on a website, allowing teams to make decisions based on more reliable information.